Chip industry’s complicated contours decades in the making

The mixture of happenstance and geostrategy that helped make a small European country key to the global semiconductor market is depicted in delicious detail in Chris Miller’s “Chip War.” The book couldn’t have been timed better.

Miller, an associate professor of international history at the Fletcher School, uses a colorful cast of characters to tell the story of a truly pivotal industry’s formation, and explain why altering it in a meaningful way seems unlikely any time soon – regardless of mounting geopolitical pressure.

Chips are coveted not least for the role they play in artificial intelligence tools seemingly poised to shake things up for just about everyone. The more we want them, though, the more expensive and difficult they are to make. It’s all gotten very complicated.

Take the Dutch niche in the supply chain, for example – it’s based on one company’s machine “that took tens of billions of dollars and several decades to develop,” Miller writes, and that uses light to print patterns on silicon by deploying lasers that can hit 50 million tin drops per second.

It’s an industry full of such mind-bending extremes.

In an interview with the Forum’s Radio Davos podcast, Miller marvelled at having recently visited a facility in the US being built with the “seventh-biggest crane that exists in the world,” which will eventually assemble chips mounted with transistors “roughly the size of a coronavirus.”

Nvidia, the company now most closely identified with chips powering artificial intelligence, features prominently in Miller’s book. The company traces its roots to a meeting at a 24-hour diner on the fringes of Silicon Valley, he writes. At a certain point it realised that its semiconductors used for video-game graphics could do a good job of training AI systems. Earlier this year, its market value increased by $184 billion in a single day.

Nvidia’s chips aren’t made anywhere near Silicon Valley, though. Like most advanced semiconductors, they’re produced by another company, TSMC, at a facility in Taiwan, China that Miller describes as the “most expensive factory in the world.”

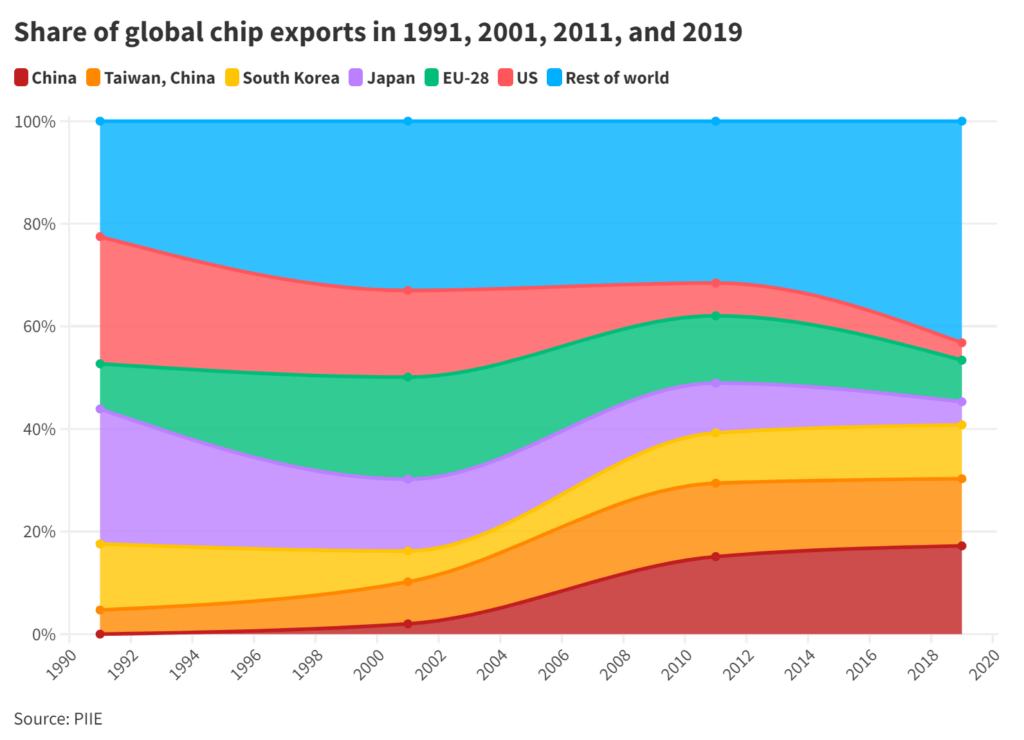

In fact, US chip production in general has declined sharply in recent decades.

Instead, the country has focused on research and design, while relying on links with East Asia and the Netherlands for other elements. But those links risk becoming “choke points,” as Miller describes them, if they’re disrupted by conflict or a natural disaster (it’s not just the plot of a 1980s James Bond film; Miller noted in his Radio Davos interview that an unsettling amount of the industry is located in places relatively prone to earthquakes).

These hazards, and global competition that’s formed harder edges of late, have fueled efforts to build chip resilience through greater independence.

The ongoing race to gain an edge in chips

That massive crane Miller mentioned is being put to work in the state of Arizona, which may be a key part of a current US government effort to “win the race for the 21st century” through semiconductor manufacturing.

The EU has its own initiative designed to strengthen chip competitiveness and resilience.

And a proposed $20 billion effort to build India’s first semiconductor factory (or “fab,” in industry lingo) recently fell through when a key partner backed out.

In his Radio Davos interview, Miller said the daunting size of the previously planned investment in India is about standard for any new, fully-fledged manufacturing facility. Critics of what the US spends on its military might like to know that “making semiconductors is so expensive that even the Pentagon can’t afford to do it in-house,” he writes.

Sharing the considerable financial burden of making chips was long ago deemed necessary. That has meant research in one country, building elaborate lithography tools in another, manufacturing in another, and finally assembling in yet another. The system works in good times; in less-good times it seems problematic.

The shape of the industry was no accident, though

“Microelectronics is a mechanical brain,” Soviet leader Nikita Khrushchev pronounced in the depths of the Cold War, according to Miller’s book. “It is our future.” Khrushchev was right, but maybe not exactly in the way he would have liked.

At that time, the US was only about four years ahead of the Soviets in chip technology, as the industry’s earliest companies like Fairchild Semiconductor and Texas Instruments focused on space exploration and nuclear weapons.

Once those firms tapped into the vast American consumer market via electronics, the rest was history, Miller writes. An arms race with nuclear warheads was one thing; a race to cram millions of transistors onto a single chip was another. The Soviets fell behind, and Asia came to the fore.

Fairchild began sending its chips to Hong Kong SAR for assembly in the early 1960s. A couple of decades after that, a one-time English literature student named Morris Chang founded TSMC in Taiwan, China. The company now churns out roughly 90% of the world’s advanced chips, and has recently been filing a sizeable portion of global semiconductor patent applications.

Having the right chips or not can make a big difference in a technology market, or on a battlefield.

But, as Miller notes, going it alone in such an expensive and complex industry has never worked. It’s unclear whether forming distinct, competing supply chains would be much better.

One of the most compelling points Miller makes is that among the many things about chips we take for granted, the biggest might be the mind-blowing increases in computing power they give us year after year.

But there’s no guarantee that will continue. Moore’s Law, which long ago posited that the number of transistors crammed onto a single chip (and with it, computing power) would double about every two years, has so far proven resilient. But it isn’t really a law – it’s just an educated guess.